Jobs

December 2024 - Today

- Solution Engineer, alfatraining Bildungszentrum GmbH
  Bringing edge AI solutions to products of the alfa corporate group, i.e., the alfaview video conferencing software.

January 2023 - November 2024

- Managing Director, Semanux GmbH
  Do more. Do it your way.
  Successful launch of the first product "Semanux Access", software that enables computer control with alternative input means like head tracking, facial expressions, non-verbal speech, buttons, and controllers.
  Founding Date: 11th January 2023

September 2021 - July 2023

- Project Lead, University of Stuttgart, Institute for Artificial Intelligence
  Semanux EXIST Project
  Semanux combines hands-free interaction with artificial intelligence to make the Web inclusive for more people.
  Operating Time: September 2021 - February 2023
  Funding: EXIST Transfer of Research

April 2021 - August 2021

- Scientific Employee, University of Stuttgart, Institute for Artificial Intelligence
  UDeco Research Project
  User-friendliness is fundamental for the success of Web applications. User studies help identify problems with Web sites. However, the evaluation of studies by usability experts is time-consuming, highly subjective, and so difficult to understand that user studies are often skipped. Hence, many systems remain hard to use. We develop UDeco, a platform for a usability data ecosystem that collects and processes usability data and knowledge from user studies in order to automate the evaluation of usability studies by means of machine learning and data mining.
  Partner: EYEVIDO GmbH
  Operating Time: January 2021 - December 2022
  Funding: KMU-innovativ program by the Federal Ministry of Education and Research of Germany

December 2016 - March 2021

- Project Lead, University of Koblenz, Institute for Web Science and Technologies
  The aim of the research project GazeMining is to capture Web sessions semantically and thus obtain a complete picture of visual content, perception, and interaction. The log streams of usability tests are evaluated using data mining. The analysis and interpretability of the data collected in this way are made possible by a user-friendly presentation and by semi-automatic and automatic analysis procedures.
  Partner: EYEVIDO GmbH
  Operating Time: January 2018 - August 2020
  Funding: KMU-innovativ program by the Federal Ministry of Education and Research of Germany

- Scientific Employee, University of Koblenz, Institute for Web Science and Technologies
  MAMEM's goal is to integrate these people back into society by increasing their potential for communication and exchange in leisure (e.g. social networks) and non-leisure contexts (e.g. workplace). In this direction, MAMEM delivers the technology to enable interface channels that can be controlled through eye-movements and mental commands. This is accomplished by extending the core API of current operating systems with advanced function calls, appropriate for accessing the signals captured by an eye-tracker, an EEG recorder, and bio-measurement sensors. Then, pattern recognition and tracking algorithms are employed to jointly translate these signals into meaningful control and enable a set of novel paradigms for multimodal interaction. These paradigms will allow for low- (e.g., move a mouse), meso- (e.g., tick a box) and high-level (e.g., select n-out-of-m items) control of interface applications through eyes and mind. A set of persuasive design principles together with profiles modeling the users' (dis-)abilities will also be employed for designing adapted interfaces for disabled users. MAMEM will engage three different cohorts of disabled people (i.e. Parkinson's disease, muscular disorders, and tetraplegia) that will be asked to test a set of prototype applications dealing with multimedia authoring and management.
  MAMEM's final objective is to assess the impact of this technology in making these people more socially integrated by, for instance, becoming more active in sharing content through social networks and communicating with their friends and family.
  Partners: CERTH - Centre for Research & Technology Hellas, EB Neuro S.p.A (EBN), SMI GmbH, Eindhoven University of Technology (TUe), Muscular Dystrophy Association (MDA) Hellas, Auth - School of Medicine, and Sheba Medical Center (SMC)
  Operating Time: May 2015 - July 2018
  Funding: EU Project Horizon 2020 - The EU Framework Programme for Research and Innovation

March 2014 - November 2016

- Student Assistant, University of Koblenz, Institute for Web Science and Technologies
  Software development in Java and C++

November 2013 - February 2015

- Student Assistant, University of Koblenz, Institute for Web Science and Technologies
  Correction of assignments for the course Algorithms and Data Structures

Academia

February 2021

- Dr. rer. nat., University of Koblenz, Institute for Web Science and Technologies
  Grade: magna cum laude (very good)
  Improving Usability and Accessibility of the Web with Eye Tracking
  The Web is an essential component of moving our society to the digital age. We use it for communication, shopping, and doing our work. Most user interaction in the Web happens with Web page interfaces. Thus, the usability and accessibility of Web page interfaces are relevant areas of research to make the Web more useful. Eye tracking is a tool that can be helpful in both areas, performing usability testing and improving accessibility. It can be used to understand users' attention on Web pages and to support usability experts in their decision-making process. Moreover, eye tracking can be used as an input method to control an interface. This is especially useful for people with motor impairment, who cannot use traditional input devices like mouse and keyboard. However, interfaces on Web pages become more and more complex due to dynamics, i.e., changing contents like animated menus and photo carousels. We need general approaches to comprehend dynamics on Web pages, allowing for efficient usability analysis and enjoyable interaction with eye tracking. In the first part of this thesis, we report our work on improving gaze-based analysis of dynamic Web pages. Eye tracking can be used to collect the gaze signals of users, who browse a Web site and its pages. The gaze signals show a usability expert what parts in the Web page interface have been read, glanced at, or skipped. The aggregation of gaze signals allows a usability expert insight into the users' attention at a high level, before looking into individual behavior. For this, all gaze signals must be aligned to the interface as experienced by the users. However, the user experience is heavily influenced by changing contents, as these may cover a substantial portion of the screen.
  We delineate unique states in Web page interfaces including changing contents, such that gaze signals from multiple users can be aggregated correctly. In the second part of this thesis, we report our work on improving the gaze-based interaction with dynamic Web pages. Eye tracking can be used to retrieve gaze signals while a user operates a computer. The gaze signals may be interpreted as input controlling an interface. Nowadays, eye tracking as an input method is mostly used to emulate mouse and keyboard functionality, hindering an enjoyable user experience. There exist a few Web browser prototypes that directly interpret gaze signals for control, but they do not work on dynamic Web pages. We have developed a method to extract interaction elements like hyperlinks and text inputs efficiently on Web pages, including changing contents. We adapt the interaction with those elements for eye tracking as the input method, such that a user can conveniently browse the Web hands-free. Both parts of this thesis conclude with user-centered evaluations of our methods, assessing the improvements in the user experience for usability experts and people with motor impairment, respectively.
  Examiner and Supervisor: Prof. Dr. Steffen Staab
  Further Examiners: Prof. Dr. Andreas Bulling and Prof. Dr.-Ing. Dietrich Paulus
  Chair of PhD Board: Prof. Dr. Jan Jürjens
  Chair of PhD Commission: Prof. Dr. Harald F.O. von Korflesch

October 2016

- Master of Science, University of Koblenz, Computational Visualistics Programme
  Overall Grade: 1.1 (very good)
  Grade: 1.0 (very good)
  The surface of a molecule holds important information about the interaction behavior with other molecules. Amino acid residues with different properties change their position within the molecule over time. Some rise up to the surface and contribute to potential bindings. Others descend back into the molecular structure. Surface extraction algorithms are discussed, and for the most appropriate one a highly parallel implementation is proposed. Layers of atoms are extracted by an iterative application of the algorithm. This allows one to track residues in their movement within the molecule with respect to their distance to the surface or core. Sampling of the surface is utilized for approximations of further values of interest, like surface area. Novel visualization methods are presented to support scientists in the inspection of simulated molecule foldings. Atoms are colored according to their movement activity, or an arbitrary group of atoms can be highlighted and analyzed. The proximity of residues to surface or core can be calculated over simulation time and allows conclusions about their contribution.
  Supervisors: Prof. Dr. Stefan Müller and Nils Lichtenberg, M.Sc.

March 2014

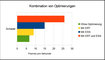

- Bachelor of Science, University of Koblenz, Computational Visualistics Programme
  Overall Grade: 1.4 (very good)
  Grade: 1.0 (very good)
  This thesis covers the mathematical background of ray-casting as well as an exemplary implementation on graphics processing units, using a modern programming interface. The implementation is embedded within an editor, which enables the user to activate optimizations of the algorithm. Techniques like transfer functions and local illumination are available for a more realistic visualization of materials. Moreover, the user interface gives access to features such as importing volumes, defining a custom transfer function, adjusting rendering parameters, and activating further techniques, which are also discussed in this thesis. The benefit of each technique is measured, whether it is expected to be visual or in terms of performance.
  Supervisors: Prof. Dr. Stefan Müller and Gerrit Lochmann, M.Sc.

March 2011

- Abitur, Wilhelm-Hofmann-Gymnasium, St. Goarshausen
  General qualification for university entrance. Main subjects were Physics, Maths, and English.
  Overall Grade: 1.5 (very good)

Teaching

2016 - 2024

- Thesis Supervisor, University of Stuttgart, Institute for Artificial Intelligence
  Master Thesis: Exploring the dynamics of head pointing
  Student: Georgii Gaziev
  Other Supervisors: Steffen Staab and Mathias Niepert

- Lecturer, University of Koblenz, Institute for Web Science and Technologies
  Machine Learning and Data Mining
  The course Machine Learning and Data Mining (MLDM) covers the fundamentals of machine learning and data mining. The course provides an overview of a variety of MLDM topics and related areas such as clustering and classification.
  Collaborators: Zeyd Boukhers and Tjitze Rienstra

- Lecturer, University of Koblenz, Institute for Web Science and Technologies
  Proseminar "Eye Tracking" (German)
  Other Supervisors: Matthias Thimm

- Tutor, University of Koblenz, Institute for Web Science and Technologies
  The master programme course Machine Learning and Data Mining covers the fundamentals of machine learning and data mining. The course provides an overview of a variety of MLDM topics and related areas such as optimization and deep learning.
  Lecturers: Steffen Staab and Zeyd Boukhers
  Collaborators: Qusai Ramadan, Akram Sadat Hosseini, and Mahdi Bohlouli

- Supervisor, University of Koblenz, Institute for Web Science and Technologies
  Research Lab: Eye Tracking in Word Processing
  Exploring the future of word processing with eye tracking.
  Other Supervisors: Matthias Thimm

- Supervisor, University of Koblenz, Institute for Web Science and Technologies
  The platform was created during a research lab project that set the goal to find new ways of visualizing eye tracking data.
  Other Supervisors: Chandan Kumar

- Supervisor, University of Koblenz, Institute for Web Science and Technologies
  Research Lab: GazeTheWeb - Watch
  YouTube application controlled with gaze and processing of gaze and EEG sensor data, part of the EU-funded research project MAMEM.
  Other Supervisors: Chandan Kumar and Korok Sengupta

- Supervisor, University of Koblenz, Institute for Web Science and Technologies
  Research Lab: GazeTheWeb - Tweet
  Twitter application controlled with gaze, part of the EU-funded research project MAMEM.
  Other Supervisors: Chandan Kumar and Korok Sengupta

- Thesis Supervisor, University of Koblenz, Institute for Web Science and Technologies
  Student: Hanadi Tamimi; Other Supervisors: Steffen Staab and Christoph Schaefer
  Bachelor Thesis: Visualization of Transitions on Web Sites for Usability Studies with Eye Tracking (German); Student: Christian Brozmann; Other Supervisors: Steffen Staab
  Student: Christopher Dreide; Other Supervisors: Steffen Staab
  Bachelor Thesis: Shot Detection in Screencasts of Web Browsing with Convolutional Neural Networks; Student: Daniel Vossen; Other Supervisors: Steffen Staab
  Bachelor Thesis: Semantical Classification of Icons; Student: Pierre Krapf; Other Supervisors: Steffen Staab

2012 - 2015

- Tutor, University of Koblenz, Institute of Arts, Digital Media Group
  Creation of games using the Blender Game Engine.

- Tutor, University of Koblenz, Institute of Arts, Digital Media Group
  Introduction to Unreal Development Kit (German)
  Level building, materials, visual programming, and particles.

- Tutor, University of Koblenz, Institute of Arts, Digital Media Group
  Modeling, sculpting, painting, and animation for games.

Software

Today

- C++, OpenGL, JavaScript, Chromium Embedded Framework, Google Firebase
  Gaze-controlled Web browser, part of the EU-funded research project MAMEM. GazeTheWeb effectively supports all common browsing operations like search, navigation, and bookmarks. GazeTheWeb is based on a Chromium-powered framework, comprising Web extraction to classify interactive elements and application of gaze interaction paradigms to represent these elements.
  Collaborators: Daniel Müller, Christopher Dreide, Chandan Kumar, and Steffen Staab
  3rd place at Digital Imagination Challenge

- Jekyll, JavaScript, Docker, Python
  Homepage of the Institute for Web Science and Technologies at the University of Koblenz. The entire content is organized in a Git repository as Markdown and HTML code. A push to the master branch triggers a continuous integration pipeline, which executes the Jekyll page generator within a Docker container and deploys the generated HTML pages to the Web server.
  Collaborators: Philipp Töws, Adrian Skubella, Danienne Wete, and Daniel Janke

2020

- Visual Stimuli Discovery
  C++, Python, JavaScript, OpenCV, sklearn, Tesseract, Shogun ML, Qt
  The framework of visual stimuli discovery contains the tools and scripts required to process video and interaction recordings into stimulus shots and visual stimuli.
  Collaborators: Christoph Schaefer

2018

- C++, OpenGL, PortAudio, FreeType 2
  User interface library for eye-tracking input using C++11 and OpenGL 3.3. eyeGUI supports the development of interactive eye-controlled applications with many significant aspects, like rendering, layout, dynamic modification of content, support of graphics, and animation.

2016

- Voxel Cone Tracing
  C++, OpenGL, CUDA
  Final project for the course 'Realtime Rendering'. A polygonal scene is voxelized in real-time through geometry and pixel shading. The voxel grid is transferred to CUDA, where Voxel Cone Tracing is implemented to compute ambient occlusion and global illumination. My part of the project was the efficient voxelization of the scene.
  Collaborators: Fabian Meyer, Milan Dilberovic, and Nils Höhner

2015

- Beer Heater
  C++, OpenGL, Compute Shaders
  Project about simulating air flow and heat distribution for the course 'Animation and Simulation' at the University of Koblenz in the summer term 2015. The simulation is executed with highly parallel compute shader computations.
  Collaborators: Nils Höhner

- Java, JMonkey, Blender
  Schau genau! was designed for the State Horticultural Show Landau 2015 as an arcade box game, using only gaze and one buzzer as input. Nearly 3000 sessions were played during the summer without any downtime. I used Java and the jMonkey engine as the programming framework and Blender to create the assets.
  Collaborators: Kevin Schmidt

2014

- Voraca
  C++, OpenGL
  Versatile tool to visualize volumes with GPU ray-casting. I wrote the tool as part of my bachelor thesis. It can load arbitrary volume data sets with density information. A transfer function can be adjusted to map density values to color, opacity, and shading attributes like specular reflection. The ray-casting is written as a pixel shader and improved through stochastic jittering, early ray termination, and empty space skipping.

Arts

2011 - 2016

- Artist, University of Koblenz, Institute of Arts, Digital Media Group
  We are proud to present our short film "Voxelmania. Effects of Videogames on Service Robots". The main actor is LISA. She is our star and also an award-winning service robot in different international contests (supported by team homer@university koblenz-landau, campus Koblenz). In a crossover of dream and reality, LISA is exploring the world of video games.
  Course: Open space course of summer term 2016 by Markus Lohoff
  Collaborators: Julien Rodewald, Markus Lohoff, and 15 further students

- Artist, University of Koblenz, Institute of Computational Visualistics, Computer Graphics Group
  Ship happens! - A 3ds Max Movie
  Small lighthouse is short of sleep...
  Course: 3ds Max course of winter term 2013/2014 by Sebastian Pohl
  Collaborators: Adrian Derstroff, Raphael Heidrich, Dominik Cremer, and Saner Demirel

- Artist, University of Koblenz, Institute of Arts, Digital Media Group
  What are the benefits for visual perception that having two eyes brings with it? Which sensations can be artificially generated? To find out, it is the task of two robots to work through an experimental series that highlights some aspects of the problem.
  Course: Aspects of image design of summer term 2011 by Markus Lohoff
  Collaborators: Arend Buchacher and Michael Taenzer

2008 - 2014

- Artist, Personal Activity
  Little creatures need your help! Save them by kicking them into the lit hole and make use of the environment to use fewer kicks. Each kick increases the inner pressure of the creature: one kick over the limit and it explodes like a balloon filled with too much hot air.
  Collaborators: Andre Taulien and Michael Taenzer
  10,000 downloads in Windows Phone Store

- Artist, Personal Activity
  Beyond Jupiter - A role-playing hack and slash game
  Collaborators: Andre Taulien

Publications

I have published my research at international conferences and in journals, such as ACM ETRA, ACM WWW, ACM ToCHI, and ACM TWeb. I have also reviewed submissions for ACM ETRA, ACM UIST, and ACM CHI.

2025

- Menges, R., Staab, S., Schaefer, C., Walber, T., and Kumar, C. 2025. What Did My Users Experience? Discovering Visual Stimuli on Graphical User Interfaces of the Web. ACM Trans. Web 19, 2.

2023

- Hedeshy, R., Menges, R., and Staab, S. 2023. CNVVE: Dataset and Benchmark for Classifying Non-verbal Voice. Proceedings of INTERSPEECH 2023, 1553–1557.

2021

- Hedeshy, R., Kumar, C., Menges, R., and Staab, S. 2021. Hummer: Text Entry by Gaze and Hum. Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, Association for Computing Machinery.

2020

- Hedeshy, R., Kumar, C., Menges, R., and Staab, S. 2020. GIUPlayer: A Gaze Immersive YouTube Player Enabling Eye Control and Attention Analysis. ACM Symposium on Eye Tracking Research and Applications, Association for Computing Machinery.

- Kumar, C., Menges, R., Sengupta, K., and Staab, S. 2020. Eye tracking for Interaction: Evaluation Methods. In: S. Nikolopoulos, C. Kumar and I. Kompatsiaris, eds., Signal Processing to Drive Human-Computer Interaction: EEG and eye-controlled interfaces. Institution of Engineering and Technology, 117–144.

- Menges, R., Kumar, C., and Staab, S. 2020. Eye tracking for Interaction: Adapting Multimedia Interfaces. In: S. Nikolopoulos, C. Kumar and I. Kompatsiaris, eds., Signal Processing to Drive Human-Computer Interaction: EEG and eye-controlled interfaces. Institution of Engineering and Technology, 83–116.

- Menges, R., Kramer, S., Hill, S., Nisslmueller, M., Kumar, C., and Staab, S. 2020. A Visualization Tool for Eye Tracking Data Analysis in the Web. ACM Symposium on Eye Tracking Research and Applications, Association for Computing Machinery.

2019

- Menges, R., Kumar, C., and Staab, S. 2019. Improving User Experience of Eye Tracking-Based Interaction: Introspecting and Adapting Interfaces. ACM Trans. Comput.-Hum. Interact. 26, 6, 37:1–37:46.
  Eye tracking systems have greatly improved in recent years, being a viable and affordable option as digital communication channel, especially for people lacking fine motor skills. Using eye tracking as an input method is challenging due to accuracy and ambiguity issues, and therefore research in eye gaze interaction is mainly focused on better pointing and typing methods. However, these methods eventually need to be assimilated to enable users to control application interfaces. A common approach to employ eye tracking for controlling application interfaces is to emulate mouse and keyboard functionality. We argue that the emulation approach incurs unnecessary interaction and visual overhead for users, aggravating the entire experience of gaze-based computer access. We discuss how the knowledge about the interface semantics can help reducing the interaction and visual overhead to improve the user experience. Thus, we propose the efficient introspection of interfaces to retrieve the interface semantics and adapt the interaction with eye gaze. We have developed a Web browser, GazeTheWeb, that introspects Web page interfaces and adapts both the browser interface and the interaction elements on Web pages for gaze input. In a summative lab study with 20 participants, GazeTheWeb allowed the participants to accomplish information search and browsing tasks significantly faster than an emulation approach. Additional feasibility tests of GazeTheWeb in lab and home environment showcase its effectiveness in accomplishing daily Web browsing activities and adapting large variety of modern Web pages to suffice the interaction for people with motor impairment.

- Kumar, C., Akbari, D., Menges, R., MacKenzie, S., and Staab, S. 2019. TouchGazePath: Multimodal Interaction with Touch and Gaze Path for Secure Yet Efficient PIN Entry. 2019 International Conference on Multimodal Interaction, ACM, 329–338.

- Sengupta, K., Menges, R., Kumar, C., and Staab, S. 2019. Impact of Variable Positioning of Text Prediction in Gaze-based Text Entry. Proceedings of the 11th ACM Symposium on Eye Tracking Research & Applications, ACM, 74:1–74:9.

2018

- Lichtenberg, N., Menges, R., Ageev, V., et al. 2018. Analyzing Residue Surface Proximity to Interpret Molecular Dynamics. Computer Graphics Forum.

- Menges, R., Tamimi, H., Kumar, C., Walber, T., Schaefer, C., and Staab, S. 2018. Enhanced Representation of Web Pages for Usability Analysis with Eye Tracking. Proceedings of the 2018 ACM Symposium on Eye Tracking Research & Applications, ACM, 18:1–18:9.
  Eye tracking as a tool to quantify user attention plays a major role in research and application design. For Web page usability, it has become a prominent measure to assess which sections of a Web page are read, glanced or skipped. Such assessments primarily depend on the mapping of gaze data to a Web page representation. However, current representation methods, a virtual screenshot of the Web page or a video recording of the complete interaction session, suffer either from accuracy or scalability issues. We present a method that identifies fixed elements on Web pages and combines user viewport screenshots in relation to fixed elements for an enhanced representation of the page. We conducted an experiment with 10 participants and the results signify that analysis with our method is more efficient than a video recording, which is an essential criterion for large scale Web studies.
  Best Video Award (for accompanying video)

- Sengupta, K., Ke, M., Menges, R., Kumar, C., and Staab, S. 2018. Hands-free Web Browsing: Enriching the User Experience with Gaze and Voice Modality. Proceedings of the 2018 ACM Symposium on Eye Tracking Research & Applications, ACM, 88:1–88:3.

2017

- Kumar, C., Menges, R., and Staab, S. 2017. Assessing the Usability of Gaze-Adapted Interface against Conventional Eye-Based Input Emulation. 2017 IEEE 30th International Symposium on Computer-Based Medical Systems (CBMS), IEEE, 793–798.

- Sengupta, K., Sun, J., Menges, R., Kumar, C., and Staab, S. 2017. Analyzing the Impact of Cognitive Load in Evaluating Gaze-Based Typing. 2017 IEEE 30th International Symposium on Computer-Based Medical Systems (CBMS), IEEE, 787–792.
  Best Student Paper Award

- Kumar, C., Menges, R., Müller, D., and Staab, S. 2017. Chromium Based Framework to Include Gaze Interaction in Web Browser. Proceedings of the 26th International Conference on World Wide Web Companion, International World Wide Web Conferences Steering Committee, 219–223.
  Honourable Mention

- Menges, R., Kumar, C., Müller, D., and Sengupta, K. 2017. GazeTheWeb: A Gaze-Controlled Web Browser. Proceedings of the 14th Web for All Conference on The Future of Accessible Work, ACM, 25:1–25:2.
  Judges Award at TPG Accessibility Challenge

- Menges, R., Kumar, C., Wechselberger, U., Schaefer, C., Walber, T., and Staab, S. 2017. Schau genau! A Gaze-Controlled 3D Game for Entertainment and Education. Journal of Eye Movement Research, 220.

- Sengupta, K., Menges, R., Kumar, C., and Staab, S. 2017. GazeTheKey: Interactive Keys to Integrate Word Predictions for Gaze-based Text Entry. Proceedings of the 22nd International Conference on Intelligent User Interfaces Companion, ACM, 121–124.

2016

- Kumar, C., Menges, R., and Staab, S. 2016. Eye-Controlled Interfaces for Multimedia Interaction. IEEE MultiMedia 23, 4, 6–13.

- Menges, R., Kumar, C., Sengupta, K., and Staab, S. 2016. eyeGUI: A Novel Framework for Eye-Controlled User Interfaces. Proceedings of the 9th Nordic Conference on Human-Computer Interaction, ACM, 121:1–121:6.